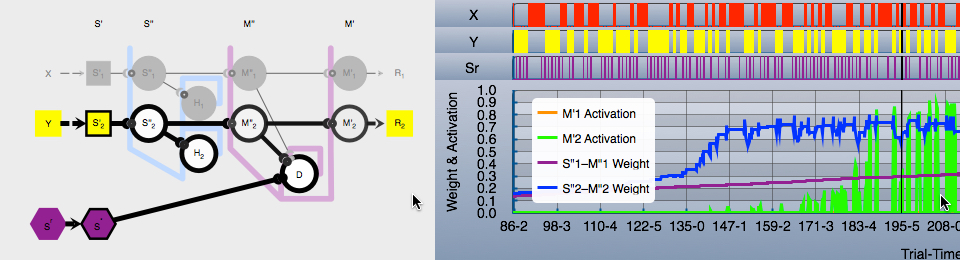

Arbitrary symbols in place of “words”.

Basics

David Cox recently gave an excellent webinar overview of how one might use traditional NLP in support of a behavior analysis of verbal behavior.

In it, David discussed turning text into numbers for more efficient processing. The particular format for this numeric representation is known as “bag-of-words”.

Until this morning, I thought that bag-of-words was invented by Gerard Salton as part of his vector space model (see the section “Software that implements the vector space model”). However, the Wikipedia article says it was invented much earlier, in 1954.

Bag-of-words is used frequently in information retrieval and natural language processing. Essentially, it assigns each word an integer id and pairs that id with a count of how often the word occurs in the input text. This is much more computationally efficient than handling the text itself: each word can be referenced as a fixed-width value that is directly supported by computer CPUs, whereas processing text, even short words, is more expensive because words are variable length and require overhead for managing that length.

Many years ago, at the search engine company I worked for, we did a quickie analysis of word length in an English language document collection that totaled in the gigabytes. The documents were all over the place in terms of content: financial news, general newspaper articles, literary works, encyclopedia articles, etc. Average word length: seven characters. An integer on most modern computers (including mobile devices) is 64 bits, that is, 8 bytes, or eight “characters”. So the integer is no smaller than the average word. But an integer is always the same length and, more importantly, is a type of data that is native to the CPU and is handled much more efficiently than most other data types. Thus, software that handles text as abstract symbols (that is, integers) will generally perform better than software that handles the text directly.
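To make the fixed-width point concrete, here is a minimal sketch. The word list and the `word_to_id` mapping are made up for illustration. Python's own `int` objects are not fixed-width, so the sketch uses the standard library `array` type with the `"q"` type code (a signed 64-bit integer on mainstream platforms) to mimic what lower-level software would do.

```python
from array import array

words = ["a", "financial", "encyclopedia", "news"]

# Hypothetical vocabulary: each distinct word gets the next free integer id.
word_to_id = {}
for word in words:
    word_to_id.setdefault(word, len(word_to_id))

# Text form: every word occupies a different number of bytes.
byte_lengths = [len(word.encode("utf-8")) for word in words]

# Integer form: every id occupies the same number of bytes.
ids = array("q", (word_to_id[word] for word in words))

print(byte_lengths)  # [1, 9, 12, 4]
print(ids.itemsize)  # 8
```

The words range from 1 to 12 bytes, while every id is the same 8 bytes, which is what lets the ids be stored and compared without any length bookkeeping.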

A bag-of-words model represents words in two parts:

- Text collection: A vector (or dictionary) with a numeric integer index (or key) providing access to the original text.

- Bags-of-words, one for each unit of interest (sentence, paragraph, document, whatever). No text at all, just an integer for each word id (index or key) and another for the count of each word in the unit.

At this point, you can discard the text collection. It is not needed. It is never referenced during processing. Okay, don’t delete it. You will need it for human-readable output, but it is not needed for “real work”.
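The two parts can be sketched in a few lines of plain Python. This is only an illustration of the idea, not how Gensim or any particular library implements it; the sample documents and the names `vocabulary`, `word_to_id`, and `bags` are assumptions made up for the example.

```python
from collections import Counter

documents = [
    "the cat sat on the mat",
    "the dog sat",
]

vocabulary = []   # part 1: the list index is the word id, the value is the text
word_to_id = {}   # helper used only while building the bags
bags = []         # part 2: one {word id: count} mapping per document

for text in documents:
    ids = []
    for word in text.split():
        if word not in word_to_id:
            word_to_id[word] = len(vocabulary)
            vocabulary.append(word)
        ids.append(word_to_id[word])
    bags.append(dict(Counter(ids)))

print(bags)
# [{0: 2, 1: 1, 2: 1, 3: 1, 4: 1}, {0: 1, 5: 1, 2: 1}]

# The text is consulted only when a human needs to read the result.
readable = {vocabulary[word_id]: count for word_id, count in bags[1].items()}
print(readable)  # {'the': 1, 'dog': 1, 'sat': 1}
```

Everything between building `bags` and producing `readable` could run without `vocabulary` being in memory at all.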

The above is the most basic process. But you might also want to represent “phrases”, that is, tuples or n-grams (see Gensim phrase detection). Thus, you might first find pairs of words that occur together with some frequency, and treat the pairings as “words” in their own right. Same for triples and so on. But the idea is the same: add the phrases to the word dictionary as if they were words, and treat them as words in the bag-of-words. The result is that the NLP will treat the individual words forming the phrase both separately and in combination. This is useful in cases where a particular “word” is essentially noise when it occurs alone, but is significant when it occurs with another particular word.
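A rough sketch of the phrase idea follows. It uses a simple raw-count threshold, which is a stand-in I chose for illustration and not Gensim's phrase scoring; the sample sentences, `MIN_COUNT`, and `bag_with_phrases` are likewise hypothetical.

```python
from collections import Counter

sentences = [
    ["new", "york", "is", "big"],
    ["i", "love", "new", "york"],
    ["a", "new", "idea"],
]
MIN_COUNT = 2  # hypothetical threshold: a pair must occur at least this often

# Count every adjacent pair of words across the collection.
pair_counts = Counter(
    pair for sentence in sentences for pair in zip(sentence, sentence[1:])
)
phrases = {pair for pair, count in pair_counts.items() if count >= MIN_COUNT}

def bag_with_phrases(sentence):
    """Count the individual words plus each detected phrase as its own 'word'."""
    tokens = list(sentence)
    tokens += [
        "_".join(pair) for pair in zip(sentence, sentence[1:]) if pair in phrases
    ]
    return Counter(tokens)

print(phrases)  # {('new', 'york')}
print(bag_with_phrases(sentences[0])["new_york"])  # 1
print(bag_with_phrases(sentences[2])["new_york"])  # 0
```

In the third sentence “new” is counted only as a lone word, while in the first it is counted both alone and as part of `new_york`, which is the separate-and-in-combination treatment described above.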

Text Processing Without Text?

This illustrates something that we all know, but rarely say, about the fundamental nature of words: they are meaningless in their own right. They only have meaning in relation to other phenomena. They only have “meaning” in their consistent associations as one type of stimulus event to other types of stimulus events (where “stimulus event” is anything that can happen under the laws of physics ruling our particular reality).

In the NLP processes we are talking about, the integer that serves as an id for a textual unit stands alone throughout processing. There is no reference to human textual forms, speech, fonts, character code points, none of that. The integer is what folks in some environments might call an abstract symbol, and what some behavioral experiments might refer to as a nonsense stimulus. However, it is the job of software such as Gensim to provide a type of “meaning” by clustering nonsense stimuli that occur in regular relations to one another.
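As a toy illustration of finding regular relations among bare ids, the sketch below counts how often pairs of ids turn up in the same bag. This is plain co-occurrence counting, chosen here for simplicity; it is not the topic modeling that Gensim performs, and the bags and the threshold of two are made up.

```python
from collections import Counter
from itertools import combinations

# Each bag is just a set of integer ids. There is no text anywhere.
bags = [
    {0, 1, 2},
    {0, 1},
    {3, 4},
    {3, 4, 2},
]

# Count how many bags each pair of ids shares.
cooccurrence = Counter()
for bag in bags:
    for a, b in combinations(sorted(bag), 2):
        cooccurrence[(a, b)] += 1

# Keep the pairs that appear together in at least two bags.
related = sorted(pair for pair, count in cooccurrence.items() if count >= 2)
print(related)  # [(0, 1), (3, 4)]
```

Ids 0 and 1 end up grouped, as do 3 and 4, purely because of where they occur; what any of them stand for never enters into it.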

Reminiscent of JEAB articles involving concept formation. “Topic” formation. Note that after Gensim is “trained”, it can be used to categorize/classify novel textual input. Compare to:

- Lubow, R. E. (1974). High-Order Concept Formation in the Pigeon. Journal of the Experimental Analysis of Behavior, 21, 475-483.

In fact, Gensim does not care what is identified by the “word id”. It can be anything. In NLP we generally start with text.

But what is text? It is a set of code points that may (or may not) be displayed to humans using a system of associated glyphs we call fonts. Or the code points could be converted to speech by the iPhone in your pocket. But within much of the NLP world, once a chunk of text is converted to a “symbol”, the externalized visual or auditory outputs are irrelevant. The identified “symbol” could be an image (as in a cluster of glyphs from the Times Roman font). Or it could be an arbitrarily chosen line drawing. It could be a part in an automotive engineering diagram.

So there ya go: want to process arbitrary stimuli along with your text? Why not?
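Here is a small sketch of that point, assuming only that each stimulus can be given some hashable stand-in. The `to_bag` helper, the part number, and the mixed observation list are all hypothetical.

```python
from collections import Counter

def to_bag(symbols, symbol_to_id):
    """Map any hashable symbols to integer ids and count the ids."""
    ids = [symbol_to_id.setdefault(s, len(symbol_to_id)) for s in symbols]
    return Counter(ids)

symbol_to_id = {}

# A word, a (made-up) part number, a line-drawing glyph, and some image bytes.
observation = ["red", ("part", 4471), "▲", "red", b"\x89PNG", ("part", 4471)]

bag = to_bag(observation, symbol_to_id)
print(dict(bag))  # {0: 2, 1: 2, 2: 1, 3: 1}
```

Once the ids are assigned, nothing downstream can tell which of them began life as a word and which as a drawing or an engine part.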

So What?

I think we too often attribute somewhat mystical properties to “words”. We sometimes forget that, as physical phenomena, they have no meaning. They only “mean” something in a behavioral context, just as is true for any other phenomena that may serve as part of a stimulus setting, as “discrete” antecedents, responses, or consequences. The same processes one would use to establish anything as a functional stimulus are required.