It has been over two years since the last post on this site. There has been progress, but not nearly as much as I had hoped. Turns out that there is a LOT to keep a retired person very busy.

Here is a summary of what has been done over the past couple of years, none of it published yet.

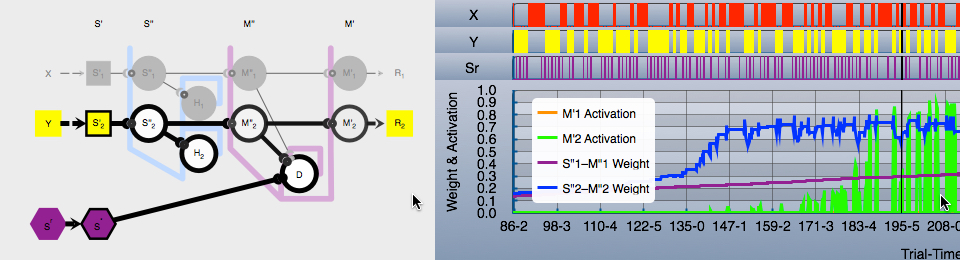

Progress on the “M” in “MVC”

Model-View-Controller (MVC) is a software architectural pattern that separates the main logic (i.e., Model) from presentation (i.e., View) and user input (i.e., Controller). The model presents an Application Programming Interface (API) that is used to implement the final application(s).

The published version (i.e., the BGL2015 Visualizer demo) served as a starting point, but the as-yet-unpublished code bears little resemblance to the demo. It has grown to include these modules:

- BAExperiment

- BASelectionistNeuralNetwork

- BAStateMachine

- BASQLite

- BADataModel

- BASimulationFoundation

BAExperiment

Framework for “experiments” consisting of any number of interacting entities. Includes basic data collection on each time step of the simulation.

Major elements include:

- Simulation: Top level container that essentially defines the outer bounds of the known world for the entities, and runs the simulations.

- Entities: Active elements in the simulation. Input is an environment containing state snapshots of all entities in the simulation. Output is a new state snapshot for each entity.

- Entity snapshot: State of an entity at the end of a time step (i.e., at the end of a simulation cycle). The state variables published in the snapshot are defined by the entity.

- Environment snapshot: Collection of snapshots at the end of a time step (cycle), one for each entity.

- The simulation ends when the entity designated as the controller says the session is over.

A cycle (i.e., time step) in the simulation is a fixed sequence of:

- Pass the current environment to each entity. The environment contains snapshots for the previous time step for all entities. These are the “stimuli”.

- Each entity generates a new snapshot based on the environment as it existed at the beginning of the cycle. These snapshots are collected into a new environment. These are the “responses”.

- The output (new) environment is recorded in the database.

That is: each cycle presents environmental stimuli to the entities. The environmental stimuli consist of all of the state at the end of the last cycle. Each entity generates responses to these stimuli, which make up the environment for the next cycle.

Each cycle defines a time quantum. A sequence of cycles defines the stream of time for the simulation. Entities may only change state when presented with the environment and a new time stamp. Entities affect the world around them by setting state in the new snapshot they generate in response to the environment. All entities have the opportunity to observe (i.e., read) any state variable from any snapshot, their own included. Each entity may only change its own state, which is recorded in its own newly generated snapshot. All outputs from one cycle are inputs to the next, in a continuous stream of environmental changes over time.
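To make the cycle concrete, here is a minimal Swift sketch of the loop described above. It is not the BAExperiment code: every name in it (`Entity`, `Snapshot`, `Environment`, `Counter`, `Controller`, `runSimulation`, the `"count"` and `"done"` state variables) is hypothetical, snapshots are simplified to dictionaries of numbers, and an in-memory array stands in for the database.

```swift
// Hypothetical types for illustration only; not the BAExperiment API.
typealias EntityID = String
typealias Snapshot = [String: Double]          // state variables published by one entity
typealias Environment = [EntityID: Snapshot]   // one snapshot per entity

protocol Entity {
    var id: EntityID { get }
    // Reads the previous cycle's environment (the stimuli) and
    // returns this entity's new snapshot (the response).
    func respond(to environment: Environment, time: Int) -> Snapshot
}

// A trivial "subject": increments its own counter on every cycle.
struct Counter: Entity {
    let id: EntityID
    func respond(to environment: Environment, time: Int) -> Snapshot {
        let previous = environment[id]?["count"] ?? 0
        return ["count": previous + 1]
    }
}

// A trivial controller: ends the session once the watched entity's
// published count (as of the previous cycle) reaches the limit.
struct Controller: Entity {
    let id: EntityID
    let watched: EntityID
    let limit: Double
    func respond(to environment: Environment, time: Int) -> Snapshot {
        let seen = environment[watched]?["count"] ?? 0
        return ["done": seen >= limit ? 1 : 0]
    }
}

func runSimulation(entities: [Entity], controllerID: EntityID, maxCycles: Int) -> [Environment] {
    var environment: Environment = [:]   // empty world before the first cycle
    var log: [Environment] = []          // stands in for the database
    for time in 0..<maxCycles {
        var next: Environment = [:]
        // Every entity sees the same environment from the start of the cycle.
        for entity in entities {
            next[entity.id] = entity.respond(to: environment, time: time)
        }
        log.append(next)                 // record the output environment
        environment = next               // responses become the next stimuli
        if next[controllerID]?["done"] == 1 { break }
    }
    return log
}

let log = runSimulation(
    entities: [Counter(id: "subject"),
               Controller(id: "controller", watched: "subject", limit: 3)],
    controllerID: "controller",
    maxCycles: 100
)
```

Because the controller only ever reads the previous cycle's snapshots, it reacts one cycle late: in this run it sees a count of 3 during the fourth cycle, so the log holds four environments and the subject's final count is 4.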

The two currently defined entities are:

- StateMachineEntity: Generally the controller for the simulation. Defines the experimental procedure (process).

- NeuralNetworkEntity: The “subject” of the experiment. Gets one update event per time step.

BASelectionistNeuralNetwork

Framework for defining and updating neural networks.

BAStateMachine

Framework for defining a stack-oriented specialization of a Harel State Chart. Supports states nested to any depth, and any number of concurrent states, along with signaling between states.

BASQLite

Partially completed Swift wrapper for SQLite. Includes a foundation for multitasking SQLite requests using Grand Central Dispatch (specifically, a private serial queue).
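The general idea of funneling requests through a private Grand Central Dispatch queue can be sketched as follows. This is an illustration of the pattern, not the BASQLite code: `SerializedStore` and its methods are hypothetical, the queue is assumed to be serial, and an in-memory array stands in for the SQLite connection so that the example runs on its own.

```swift
import Dispatch

// Hypothetical stand-in for a database wrapper; a real one would own a
// SQLite connection instead of an array.
// @unchecked Sendable: all access to `rows` goes through the private queue.
final class SerializedStore: @unchecked Sendable {
    // A DispatchQueue created with only a label is serial, so work items
    // run one at a time, in the order they were submitted.
    private let queue = DispatchQueue(label: "example.store.serial")
    private var rows: [String] = []

    // Asynchronous write: returns immediately; the work runs later on the queue.
    func insert(_ row: String) {
        queue.async { self.rows.append(row) }
    }

    // Synchronous read: blocks the caller until all previously queued work has run.
    func allRows() -> [String] {
        queue.sync { rows }
    }
}

let store = SerializedStore()
for i in 1...3 { store.insert("row \(i)") }
let rows = store.allRows()   // the three rows, in submission order
```

Keeping the queue private means callers never touch the underlying storage directly, so every request is serialized no matter which thread it comes from.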

BADataModel

Framework for user-defined data types, objects, and scripting. The ultimate goal is for each of these to be created through a graphical UI, without the need for the Xcode development environment.

BASimulationFoundation

Framework of low-level elements used throughout the simulation.

Progress on the “VC” in “MVC”

Partially completed neural network editor. Looks very much like the neural network display in the BGL2015 Visualizer demo.

This work has been on hold for months due to travel and family health issues. I will resume it later this summer or early fall, when Apple releases their new SwiftUI framework. I find GUI development tedious in the extreme. If SwiftUI lives up to the promise of the WWDC 2019 videos, it will be worth re-implementing the editor using SwiftUI. It may permit me to toss out a lot of code.