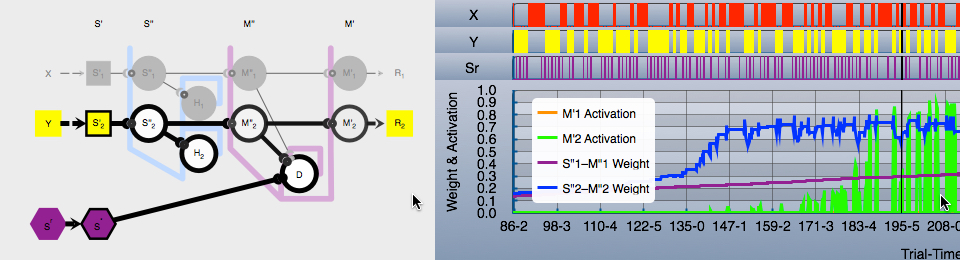

The BGL2015 Visualizer demo/prototype was the publicly viewable warmup act.

Now I’m on to the main act: a cross-platform app that will support:

- Creating and editing neural networks.

- Defining experimental procedures to put the network through its paces.

- Defining independent and dependent variables, and data collection in general.

- Defining various types of graphs, charts, and tables.

- Running the experiment and collecting data, either interactively or in “batch mode”.

- Interactively playing back the experiment using collected data.

- Etc.

It’s a huge task to take on alone, but that’s what retirement is for. If anyone is interested in helping, then once I reach the point where I could actually use help, I might make this a collaborative open-source effort.

Cross Platform

Back in my days at Personal Library Software (PLS), which was acquired by AOL, cross platform meant a wide variety of hardware, operating systems, and language and operating system versions. Our CPL search software ran on just about any Unixen you can think of, and multiple versions of each, plus VAX/VMS, the old MacOS (pre-OS X), MS Windows, and maybe others. There was talk of an IBM mainframe OS project, but I don’t think that ever went anywhere.

That is not what I mean by cross platform now.

For me, cross platform now means MacOS and iOS. That’s it.

Given that Swift is moving to other platforms, perhaps way down the road I’ll take a good look at them. For now, I’ll let more adventurous developers have all the fun of working with the new Swift ports.

First Step: Network Editor

The starting point is, of course, being able to create neural networks.

The next app component will be a graphical editor with a passing resemblance to the animated network diagram in BGL2015 Visualizer. The “model” of Model-View-Controller (MVC) will operate across MacOS and iOS. The view and controller layers, which make up the graphical user interface (GUI), will have to be platform specific. The GUI frameworks (AppKit and UIKit) are similar between MacOS and iOS, but not identical. Then there are the differences in available display space and in interaction styles.

I have written iOS apps, and now a MacOS app, so I already know that just making the model operate cross platform will be “interesting”.

A simple example: on MacOS, importing the Foundation framework is enough to get the CGFloat (Core Graphics Float) type; on iOS, I had to import UIKit instead. So I have discovered how conditional compilation works in Swift, itself a fairly new language. I have written C and C++ code that was a mass of conditional compilation and had hoped never to see it again, but it is sometimes necessary.

```swift
#if os(OSX)
import Foundation
#elseif os(iOS)
import UIKit
#endif
```
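One common way to keep conditional compilation from spreading through a code base is to confine it to one spot that defines type aliases, so the rest of the model never names NSFont or UIFont directly. The sketch below assumes the hypothetical names `PlatformFont` and `platformName`; it uses `os(macOS)`, the spelling in Swift 3 and later, where `os(OSX)` is still accepted as an alias. Importing CoreGraphics directly is another option for CGFloat, since that framework exists on both MacOS and iOS.

```swift
import Foundation

// Confine the MacOS/iOS split to one place: model code elsewhere refers
// only to PlatformFont and platformName (hypothetical names).
#if os(macOS)
import AppKit
typealias PlatformFont = NSFont
let platformName = "MacOS"
#elseif os(iOS)
import UIKit
typealias PlatformFont = UIFont
let platformName = "iOS"
#else
// Fallback so the platform-neutral parts still compile elsewhere (e.g. Linux).
let platformName = "other"
#endif

// CGFloat is visible in every branch: through AppKit or UIKit on Apple
// platforms, and through Foundation on Linux.
let labelPointSize: CGFloat = 12

print("Model built for \(platformName), label size \(labelPointSize)")
```

The conditional block still exists, but it lives in one file instead of being sprinkled through every model type.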

Another example: if a neural network is defined on one platform, including formatted text labels on the diagram as in BGL2015 Visualizer, the definition must be saved in a format that can be read on both platforms. But the formatted text string, NSAttributedString, cannot be archived in a format that is safe across platforms, at least not according to documentation I have found online. Some comments online say that NSAttributedString does not support the NSCoding protocol, but that it does now support the NSSecureCoding protocol (which itself inherits from NSCoding). Things are changing as we speak, and online advice often no longer applies.
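On a single Apple platform, a secure-coding round trip of an attributed string looks roughly like the sketch below. It assumes macOS 10.13 or iOS 11 and later, where `NSKeyedArchiver.archivedData(withRootObject:requiringSecureCoding:)` and `NSKeyedUnarchiver.unarchivedObject(ofClass:from:)` are available. The `nodeID` attribute is hypothetical and deliberately stands in for a font, so this only exercises the archiving machinery; whether NSFont and UIFont survive a MacOS-to-iOS trip is exactly the open question, and it is not tested here.

```swift
import Foundation

#if canImport(Darwin)
// Hypothetical custom attribute standing in for a font, so the sketch
// does not depend on NSFont versus UIFont.
let nodeIDKey = NSAttributedString.Key("nodeID")

// Archive with secure coding required, then decode while naming the
// class we expect back.
func roundTrip(_ label: NSAttributedString) throws -> NSAttributedString? {
    let data = try NSKeyedArchiver.archivedData(withRootObject: label,
                                                requiringSecureCoding: true)
    return try NSKeyedUnarchiver.unarchivedObject(ofClass: NSAttributedString.self,
                                                  from: data)
}

let label = NSAttributedString(string: "Neuron 1", attributes: [nodeIDKey: "n1"])
let restored = try roundTrip(label)

// NSSecureCoding inherits from NSCoding, so the class supports both.
print("supportsSecureCoding:", NSAttributedString.supportsSecureCoding)
print("restored:", restored?.string ?? "nil")
#endif
```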

So: what happens if I passivate (archive) an attributed string under MacOS, then activate (unarchive) it under iOS? Does it crash and burn because one platform uses NSFont and the other UIFont? Or does Apple use the common underlying Core Text font types, CTFont or CTFontDescriptor, to get cross-platform archiving (which is what I may end up having to do myself)?
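If attributed strings turn out not to travel well, one possible fallback is to archive a platform-neutral description of each label: the text plus each run’s font PostScript name and point size (roughly the information a CTFontDescriptor carries), then rebuild a CTFont on whichever platform reads the file. This is a minimal sketch assuming Swift 4 or later for `Codable`; `LabelRun` and `DiagramLabel` are hypothetical names, the label contents are made up, and colors, paragraph styles, and other attributes are ignored.

```swift
import Foundation
#if canImport(CoreText)
import CoreText
#endif

// Hypothetical platform-neutral description of a formatted diagram label.
// It stores only plain values, never NSFont, UIFont, or CTFont objects.
struct LabelRun: Codable, Equatable {
    var text: String
    var fontName: String   // PostScript name, e.g. "Helvetica-Bold"
    var pointSize: Double
}

struct DiagramLabel: Codable, Equatable {
    var runs: [LabelRun]
}

let label = DiagramLabel(runs: [
    LabelRun(text: "Neuron ", fontName: "Helvetica", pointSize: 12),
    LabelRun(text: "42", fontName: "Helvetica-Bold", pointSize: 12),
])

// Passivate on one platform...
let data = try JSONEncoder().encode(label)
// ...and activate on another.
let restored = try JSONDecoder().decode(DiagramLabel.self, from: data)
print(restored == label)

#if canImport(CoreText)
// On an Apple platform, rebuild a real Core Text font from the stored
// name and size; CTFont is common to MacOS and iOS.
func makeFont(for run: LabelRun) -> CTFont {
    CTFontCreateWithName(run.fontName as CFString, CGFloat(run.pointSize), nil)
}
let boldFont = makeFont(for: restored.runs[1])
print(CTFontCopyPostScriptName(boldFont))
#endif
```

Because `CTFontCreateWithName` returns a non-optional font, a name that is missing on one platform should degrade to a substitute font rather than break the whole load.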

That is my current fun: experimenting with archiving and unarchiving attributed text across platforms.

Geez, and that is just for starters, before I have even really defined what the selectionist neural network editor will look like internally.
